The OAIS Reference Model and Its Implementation Gap

The Open Archival Information System reference model, published by the Consultative Committee for Space Data Systems and adopted as ISO 14721, provides the conceptual framework within which most serious digital preservation programs operate. OAIS defines the functional components of a preservation system — ingest, archival storage, data management, administration, preservation planning, and access — and describes the information packages that flow between them. It is the closest thing the field has to a shared architecture.

The implementation gap between OAIS as a reference model and OAIS as a deployed system in real institutions is significant and, in much of the literature, underdiscussed. The model describes what a preservation system should do; it does not specify how to do it with constrained budgets, legacy infrastructure, and staff trained primarily in traditional archival practice rather than digital systems. The University at Buffalo Libraries Special Collections uses Preservica, an OAIS-compliant workflow suite, to manage its born-digital holdings, with a primary strategy centered on normalising files for preservation and presentation. This model is representative of well-resourced academic libraries. It is not representative of the field as a whole, where many institutions lack the staff, software, or storage infrastructure to implement OAIS in any meaningful sense.

NARA's 2022–2026 strategy mandates tools for forensic identification and format characterisation, including file format identification, format validation against documented specifications, and technical metadata extraction. This is the correct approach — a preservation system that does not know what it holds cannot prioritise its interventions. But building and maintaining those tools across a collection of tens of millions of records, acquired across decades, in formats ranging from standardised to idiosyncratic, is an engineering problem of genuine complexity that the strategy document acknowledges without fully resolving.
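To make the first of those steps concrete, the following is a minimal sketch of signature-based format identification, which matches the leading bytes of a file against known "magic numbers". The signature table, the labels, and the function names are illustrative assumptions rather than any institution's tooling. Production identifiers that draw on registry data match far more signatures and also inspect container contents, which this sketch does not attempt.

```python
# Illustrative, deliberately tiny signature table (an assumption for this
# sketch). Each entry pairs a leading byte sequence with a coarse label.
SIGNATURES = [
    (b"%PDF-", "PDF"),
    (b"\x89PNG\r\n\x1a\n", "PNG"),
    # OLE2 compound files include legacy Word, Excel, and PowerPoint documents.
    (b"\xd0\xcf\x11\xe0\xa1\xb1\x1a\xe1", "OLE2"),
    # ZIP containers include OOXML (.docx, .xlsx), ODF, and EPUB.
    (b"PK\x03\x04", "ZIP"),
]


def identify_bytes(header: bytes) -> str:
    """Return a coarse format label for the leading bytes of a file."""
    for magic, label in SIGNATURES:
        if header.startswith(magic):
            return label
    return "unidentified"


def identify_file(path: str) -> str:
    """Read the first 16 bytes of a file and identify them."""
    with open(path, "rb") as fh:
        return identify_bytes(fh.read(16))
```

A label such as "ZIP" or "OLE2" only narrows the possibilities. Distinguishing a Word document from a spreadsheet inside the same container, and then validating it against a specification, are separate and harder steps, which is part of why the engineering burden described above is real.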

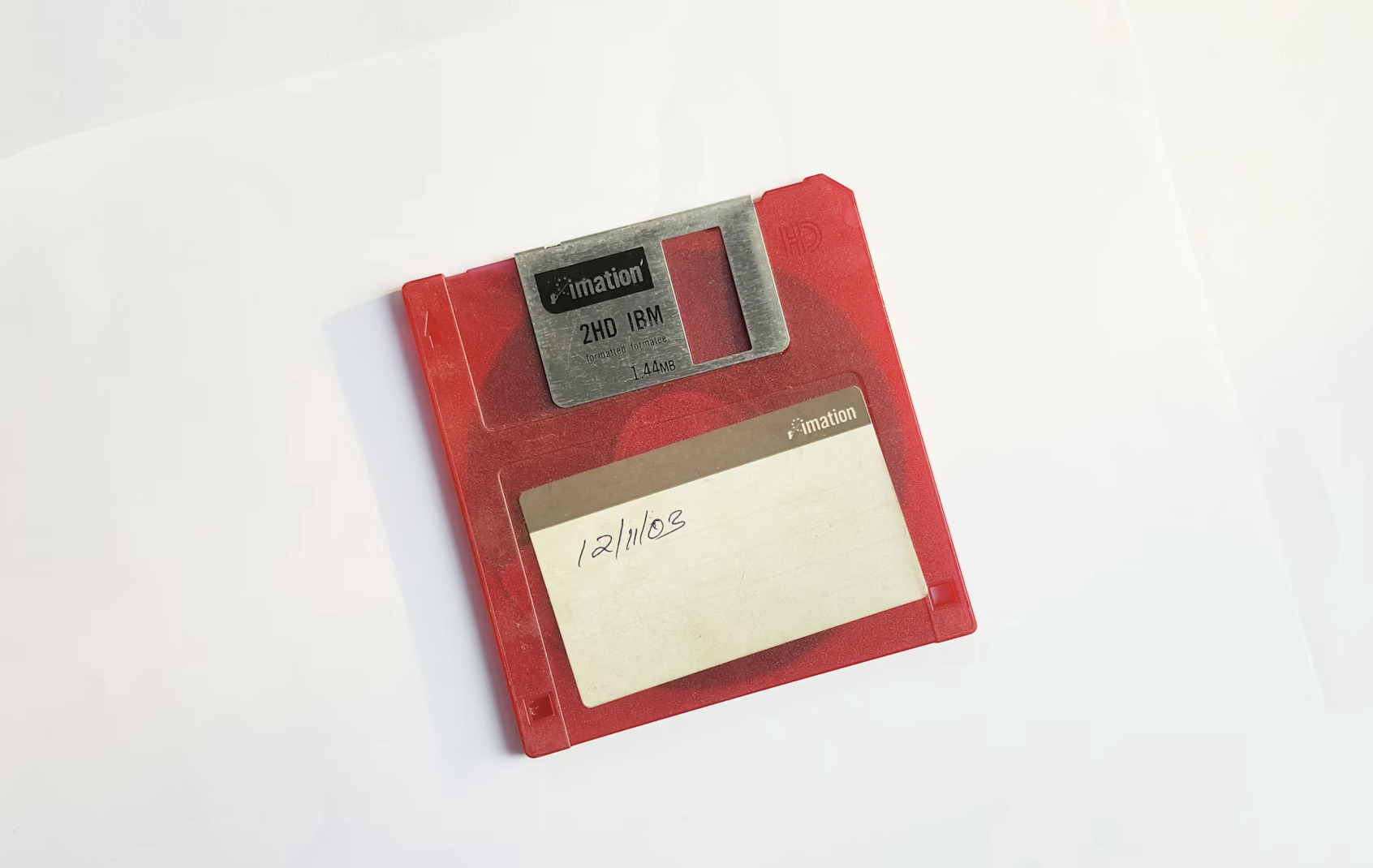

The Proprietary Format Problem

The field's consensus position on file format selection — that chosen formats should be "open, standard, non-proprietary, and well-established" — is correct and widely endorsed. It is also almost entirely retrospective in its application. The archives that most urgently need preservation attention are not archives of material created in open formats; they are archives of material created in whatever formats were standard at the time, which for most of the personal computing era meant Microsoft Word, Excel, PowerPoint, various versions of WordPerfect, Adobe's proprietary formats, and dozens of specialist applications in scientific, legal, and creative domains.

The wide adoption of proprietary file formats has created a situation in which, for many files, only the program that created the file, or in some cases only a specific version of that program, can be used to open it correctly. Format documentation, when it exists, is often incomplete, proprietary, or subject to licensing restrictions that complicate open implementation. The result is that a significant portion of the born-digital cultural record of the last four decades exists in formats whose full specification is controlled by companies that may not prioritise preservation and whose business decisions can leave those formats unsupported with little warning.

What the Next Decade Requires

The field has diagnosed the problem accurately. What it has not done is build the institutional infrastructure to address it at scale.

Three things are needed that the current state of the field does not reliably provide. First, format risk assessment capacity — the ability to survey a collection and identify which formats are at greatest risk of imminent obsolescence, so that preservation resources can be allocated to the highest-priority materials before access is lost. PRONOM, the UK National Archives' file format registry, provides the technical infrastructure for this; deployment of format assessment tools against real collections at real institutions remains inconsistent.
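A format risk survey of the kind described in the first point can be sketched in a few lines. The example below assumes that each file in a collection has already been assigned a format identifier, and it ranks formats by a risk score and then by file count. The `RISK` table, its scores, and the `rank_formats` name are hypothetical placeholders; a real assessment would derive its scores from registry data and local policy.

```python
from collections import Counter

# Hypothetical risk scores between 0 (low) and 1 (high). These values are
# placeholders for illustration, not published assessments.
RISK = {"pdf": 0.1, "docx": 0.2, "doc": 0.5, "wpd": 0.8}

# Formats missing from the table are treated as highest risk, on the
# assumption that an unknown format deserves attention first.
DEFAULT_RISK = 1.0


def rank_formats(format_ids):
    """Return (format, file_count, risk) rows, highest risk first.

    Ties on risk are broken by larger file count, then by format name.
    """
    counts = Counter(format_ids)
    rows = [(fmt, n, RISK.get(fmt, DEFAULT_RISK)) for fmt, n in counts.items()]
    return sorted(rows, key=lambda row: (-row[2], -row[1], row[0]))


if __name__ == "__main__":
    survey = ["pdf", "pdf", "wpd", "doc", "xyz"]
    for fmt, n, risk in rank_formats(survey):
        print(f"{fmt}: {n} file(s), risk {risk}")
```

Treating unknown formats as highest risk is a design choice, not a rule: it reflects the argument that a preservation system which does not know what it holds cannot prioritise its interventions.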

Second, shared emulation infrastructure: the capacity to run original software environments without requiring each institution to build and maintain its own emulation stack. The Software Preservation Network in the United States and the Software Heritage initiative in Europe, which archives software source code, represent steps in this direction. They are not yet at the scale the problem requires.

Third, and most difficult: honest institutional accounting of the gap between stated preservation commitments and actual preservation capability. An institution that holds born-digital materials and lacks the staff, storage, and software to implement even basic preservation workflows is not preserving those materials, regardless of what its collection policy says. Closing that gap requires resources that acquisitions decisions do not currently account for and that institutional leadership has not consistently prioritised.

The quiet emergency is not quiet because it is small. It is quiet because bits, unlike books, do not visibly deteriorate. They simply stop being readable. And by the time an institution discovers that a format has become obsolete, the moment for cost-effective intervention is often already past.